Competitive intelligence, the "what else", is one of the core tenets of Web Analytics 2.0.

The reason is simple: The ecosystem within which you function on the web contains mind blowing data you can use to become better.

Your traffic grew by 6% last year, what was your competitor's growth rate? 15%. Feel better? : ) When should you start doing paid search advertising for tours to Italy for 2011? In May 2010 (!). What is your "share of search" in the netbook segment compared to your biggest competitor? 9 points higher, now you deserve a bonus! How many visitors to your site go visit your competitor's site right after coming to yours? 39%, good god! Where should you run display advertisements to ensure you get in front of men considering proposing to their girlfriends (or boyfriends)? Go beyond targeting men between the ages of 28 and 34, use search behavior and be really smart.

I am just scratching the surface of what's possible.

It is simply magnificent what you can do with freely available data on the web about your direct competitors, your industry segment and indeed how people behave on search engines and other websites.

The secret to making optimal use of CI data lies in one single realization: You must ensure you understand how the data you are analyzing is collected.

Not all sources of CI data are created equal. Before you use the data that comScore or Nielsen or Google or Hitwise or Compete or your brother-in-law shoves into your face, it is key that you understand where the data comes from.

Once you understand that, you choose: 1. The best source possible that is 2. The right answer for the question you are asking (which implies you have to be flexible!).

Here are the sources of competitive intelligence data.

#1: Toolbar Data.

Toolbars are add-ons that provide additional functionality to web browsers, such as easier access to news, search features, and security protections. They are available from all the major search engines such as Google, MSN, Yahoo! as well as from thousands of other sources.

These toolbars also collect limited information about the browsing behavior of the customers who use them, including the pages visited, the search terms used, perhaps even time spent on each page, and so forth. Typically, data collected is anonymous and not personally identifiable information (PII).

After the toolbars collect the data, your CI tool then scrubs and massages the data before presenting it to you for analysis. For example, with Alexa, you can report on traffic statistics (such as rank and page views), upstream (where your traffic comes from) and downstream (where people go after visiting your site) statistics, and keywords driving traffic to a site.

Millions of people use widely deployed toolbars, mostly from the search engines, which makes these toolbars one of the largest sources of CI data available. That very large sample size makes toolbar data a very effective source of CI data, especially for macro website traffic analysis such as number of visits, average duration, and referrers.

Search engine toolbars are a ton more popular, which is the key reason that other toolbar data sources, such as Alexa, are not useful any more (the data is simply not good enough).

Toolbar Data Bottom-line: Toolbar data is typically not available by itself. It is usually a key component in tools that use a mix of sources to provide insights.

#2: Panel Data.

Panel data is another well-established method of collecting data. To gather panel data, a company may recruit participants to be in a panel, and each panel member installs a piece of monitoring software. The software collects all the panel’s browsing behavior and reports it to the company running the panel. Additionally, each panel member is required to self-report demographics, salary, household members, hobbies, education level and other such detailed information.

Varying degrees of data are collected from a panel. At one end of the spectrum, the data collected is simply the websites visited, and at the other end, the monitoring software records the credit cards, names, addresses, and any other personal information typed into the browser.

Panel data is also collected when people unknowingly opt into sending their data. Common examples are a small utility you install on your computer to get the weather or an add-on for your browser to help you auto complete forms. In the unreadable terms of service you accept, you agree to allow your browsing behavior to be recorded and reported.

Panels can have a few thousand members or several hundred thousand.

You need to be cautious about three areas when you use data or analysis based on panel data:

Sample bias: Almost all businesses, universities, and other institutions ban monitoring software because of security and privacy concerns. Therefore, most monitored behavior tends to come from home users. Since usage during business hours forms a huge amount of web consumption, it is important to know that panel data is blind to this information.

Sampling bias: People are enticed to install monitoring software in exchange for sweepstakes entries, downloadable screensavers and games, or a very small sum of money (such as $3 per month). This inclination causes a bias in the data because of the type of people who participate in the panel. This is not itself a deal breaker, but consider whose behavior you want to analyze vs. who might be in the sample.

Web 2.0 challenge: Monitoring software (overt or covert) was built when the Web was static and page-based. The advent of rich experiences such as video, Ajax, and Flash means no page views, which makes it difficult for monitoring software to capture data accurately. Some monitoring software companies have tried to adapt by asking companies to embed special beacons in their website experiences, but as you can imagine, this is easier said than done (only a select few want to do it, do those that do it do it well, and how do you compare companies that did or did not beaconify?).

The panel methodology is based on the traditional television data capture model. In a world that is massively fragmented, panels face a huge challenge in collecting accurate and complete (or even representative) data. A rule of thumb I have developed is if a site gets more than 5 million unique visitors a month, then there is a sufficient signal from panel-based data.
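To see why bigger sites give a stronger panel signal, here is a minimal back-of-the-envelope sketch. It assumes (generously!) that panelists are a simple random sample of the online population, and the panel size and population figures are made up for illustration. It does not derive the 5 million threshold, it only shows how quickly the number of panelists who ever see a small site shrinks.

```python
import math

def expected_panelists(panel_size, site_uniques, online_population):
    """Expected number of panel members visiting the site in a month,
    assuming panelists are a simple random sample of the online population."""
    return panel_size * site_uniques / online_population

def relative_error(expected_count):
    """Rough relative standard error of a count estimate (Poisson approximation)."""
    return 1 / math.sqrt(expected_count)

# Hypothetical figures, for illustration only (not from any vendor).
PANEL_SIZE = 200_000
ONLINE_POPULATION = 200_000_000

for uniques in (50_000, 500_000, 5_000_000):
    n = expected_panelists(PANEL_SIZE, uniques, ONLINE_POPULATION)
    print(f"{uniques:>9,} uniques -> ~{n:,.0f} panelists, "
          f"~{relative_error(n):.0%} relative error")
```

Under these assumed numbers, a site with 50,000 monthly uniques would be seen by only about 50 panelists, before any of the biases described above are even considered.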

Panel Data Bottom-line: For companies such as comScore and Nielsen, panel data has been a primary source for the competitive reporting they provide their clients. But because of the methodology's inherent limitations, panel data is now often augmented by other sources of data before it is provided for analysis (including for a subset of the data you'll get from comScore and Nielsen; please check and clarify before you use the data).

#3: ISP (Network) Data.

We all get our internet access from Internet Service Providers (ISPs), and as we surf the Web, our requests go through the servers of these ISPs to be stored in server log files.

The data collected by the ISP consists of elements that get passed around in URLs, such as sites, page names, keywords searched, and so on. The ISP servers can also capture information such as browser types and operating systems.

The size of these ISPs translates into a huge sample size.

For example, Hitwise, which chiefly relies on ISP data, has a sample size of 10 million people in the United States and 25 million worldwide. Such a large sample size reduces sample bias (surprise!). There are also geographically focused competitive intelligence solutions, like Netsuus in Spain, that provide analysis from excellent locally sourced data.

The other benefit of ISP data is that the sampling bias is also reduced; since you and I don’t have to agree to be monitored, our ISP simply collects this anonymous data and then sells it to third-party sources for analysis.

ISPs typically don’t publicize that they sell the data, and companies that purchase that data don’t share this information either. So, there is a chance of some bias. Ask for the sample size when you choose your ISP-based CI tool, and go for the biggest you can find.

ISP Data Bottom-line: The largest samples of CI data currently available come from ISP data (in tools like Hitwise and Compete), though both tools (and other smart ones like them) increasingly also use a small sample of panel data and even some small amount of purchased toolbar data.

#4: Search Engine Data.

Our queries to search engines, such as Bing, Google, Yahoo!, and Baidu, are logged by those search engines, along with basic connectivity information such as IP address and browser version. In the past, analysts had to rely on external companies to provide search behavior data, but increasingly search engines are providing tools to directly mine their data.

You can use search engine data with a greater degree of confidence, because it comes directly from the search engine (doh!). Remember, though, that the data is specific to that search engine, and because each search engine has a distinct user base, it is not wise to apply lessons from one to another.

With that mild warning here are some amazing tools. . . .

Similar tools are available from Microsoft: Entity Association, Keyword Group Detection, Keyword Forecast, and Search Funnels (all at Microsoft adCenter Labs).

Search Engine Data Bottom-line: Search engine data tends to be the primary, and typically the best, source you can find for search data analysis. If you are analyzing data for your SEO or PPC campaigns and you find the search engine providing the data, then you should instantly embrace it and immediately propose marriage!

#5: Benchmarks from Web Analytics Vendors.

Web analytics vendors have lots of customers, which means they have lots of data. Many vendors now aggregate this real customer data and present it in the form of benchmarks that you can use to index your own performance.

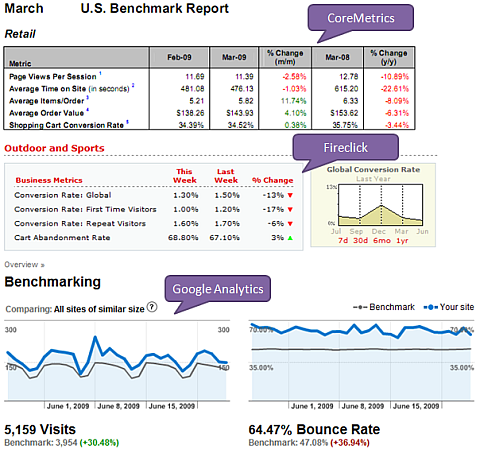

Benchmarking data is currently available from Fireclick, Coremetrics, and Google Analytics. Often, as is the case with Google Analytics, customers have to explicitly opt their data into this benchmarking service. But this is not true for all vendors, so please check with yours.

Both Fireclick and Coremetrics provide benchmarks related to conversion rates, cart abandonment, time on site, and so forth. Google Analytics provides benchmarks for visits, bounce rates, page views, time on site, and % new visits.

In all three cases, you can compare your performance to specific vertical markets (for example, retail, apparel, software, and so on), which is much more meaningful.

The cool benefit of this method is that websites directly report very accurate data, even if the web analytics vendor makes that data anonymous. The downside is that your competitors might not all use the same tool as you; therefore, you are comparing your actual performance against the actual performance of a subset of your competitors.

With data from vendors, you must be careful about sample size, that is, how many customers the web analytics vendor has. If your web analytics vendor has just 1,000 customers and it is producing benchmarks in 15 industry categories, it might be a hit or a miss in terms of how valuable / representative the benchmarks are.
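The arithmetic behind that caution is simple enough to sketch. The even split across categories is my assumption for illustration; real vendors almost certainly have lopsided categories, and even fewer customers actually opt in.

```python
def average_customers_per_category(total_customers, categories):
    """Average number of customers behind each benchmark,
    assuming customers are spread evenly across categories."""
    return total_customers / categories

# Figures from the example above: 1,000 customers, 15 industry categories.
avg = average_customers_per_category(1_000, 15)
print(f"~{avg:.0f} customers per category on average")
```

Roughly 67 sites per category, at best, is what stands behind each "industry benchmark" in that scenario.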

WA Vendor Data Bottom-line: Data from web analytics vendors comes from their clients, so it is real data. The client data is anonymous, so you can’t do a direct comparison between you and your arch enemy; rather, you’ll compare yourself to your industry segment (which is perfectly ok).

#6: Self-reported Data.

It is common knowledge that some methods of data collection, such as panel-based, do not collect data with the necessary degree of accuracy. A site’s own analytics tool may report 10 million visits, and the panel data may report 6 million. To overcome this issue, some vendors, such as Quantcast and Google’s Ad Planner, allow websites to report their own data through their tools.

For sites that rely on advertising, the data used by advertisers must be as accurate as possible; hence, the sites have an incentive to share data directly. If your competitors publish their own data through vendors such as Google’s Ad Planner or Quantcast, then that is probably the cleanest and best source of data for you.

One thing to be cautious about when you work with self-reported data: check the definitions of various metrics. For example, if you see a metric called Cookies, find out exactly what that metric means before you use the data.

Self-Reported Data Bottom-line: Because of its inherent nature, self-reported data tends to augment other sources of data provided by tools such as Ad Planner or Quantcast. It also tends to be the cleanest source of data available.

#7: Hybrid Data (/All Your Base Are Belong To Us).

Competitive intelligence vendors are observing you from the outside. Any single source (toolbar, panel, ISP, tags, spyware, etc.) will have its own bias / limitation.

Some smart vendors now use multiple sources of data to augment the data set they started their life with.

The first method is to append the data. This is what's happening in the case of Quantcast and Google Ad Planner; in both cases they have their own source of data to which your self-reported data is added. The resulting reports are "awesomely good".

The second method is to put many different sources (say toolbar, panel, ISP) into a blender, churn at high speed, throw in a pinch of math and a dash of correction algorithms, and – boom! – you have one "awesomely good" number. A good example of this is Compete or the DoubleClick Ad Planner.

Google's Trends for Websites is another example of a tool that uses hybrid data for its reporting (see answer #2 here).

The benefit of using hybrid methodology is that the vendor can plug in any gaps that might exist between different sources.

The challenge is that it is much harder to peel back the onion and understand some of the nuances and biases in the data (sometimes mildly frustrating to analysis ninjas such as myself!).

Hence, the best-practice recommendation is to forget about the absolute numbers and focus on comparing trends; the longer the time period, the better.
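One simple way to compare trends rather than absolute numbers is to index each series to its first period, so only relative movement remains. This is a minimal sketch, not a feature of any CI tool; the function name and the monthly figures are hypothetical.

```python
def index_to_first(values):
    """Rescale a series so the first period equals 100."""
    base = values[0]
    return [round(100 * v / base, 1) for v in values]

# Hypothetical monthly visit estimates from a hybrid CI tool (made-up numbers).
my_site = [120_000, 126_000, 131_000, 127_000, 135_000, 138_000]
competitor = [300_000, 318_000, 342_000, 351_000, 372_000, 390_000]

my_index = index_to_first(my_site)
competitor_index = index_to_first(competitor)

for month, (mine, theirs) in enumerate(zip(my_index, competitor_index), start=1):
    print(f"month {month}: me {mine:6.1f}  competitor {theirs:6.1f}")
```

Even if the tool's absolute estimates are off for both sites, the indexed lines still tell you who is growing faster, provided the tool's bias stays roughly constant over the period.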

Hybrid Data Bottom-line: As the name implies, hybrid data contains data from many different sources and is increasingly the most commonly used methodology. It will probably be the category that will grow the most because, frankly, the others look rather suboptimal in comparison.

[Update:] #8: External Voice of Customer Data.

This is one often overlooked source of competitive intelligence (/benchmarking) data. There are several ways to use Voice of Customer data.

For starters, various companies such as iPerceptions (like Coremetrics, Google Analytics, Fireclick above for clickstream) publish Customer Satisfaction & Task Completion Rate (my most beloved metric!) numbers for various industries.

If I am in the internet retail game I can use these benchmarks to compare my performance:

Or I can dig deeper and compare my performance by the Primary Purpose segments:

Both of the above are from the iPerceptions Q4 2009 Ecommerce Benchmark report. You'll find other reports in the Resource Center.

With other sources like the ACSI (American Customer Satisfaction Index) you can get a bit deeper. Just choose an industry from their site, I chose Internet Travel, and bam!

If I am Travelocity I am wondering what in the name of Jebus did the other guys do last year to have all those gains in Satisfaction where I got a zero.

And really what are those guys at Priceline eating! 5.6 points improvement just last year, 15 points over the last 9! Sure they started with a smaller number but still.

What can I, sad Travelocity, learn from them? From others?

In both cases above the intelligence came from a third party doing the research and giving you data for free.

But you can also commission studies, from the two companies above or one of thousands like them on the web.

Or you can do it yourself.

I just met a Top Web Company the other day and I spent $300 on 20 remote usability participants to go to the website of Top Web Company and their main competitor. I gave the usability participants the exact same tasks to do on both sites.

The scores were most illuminating (and embarrassing for Top Web Company). It allowed me to (without working at either company) collect competitive intelligence about how each was delivering against: 1. Task Completion Rate and 2. Customer Satisfaction.
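If you run a study like this yourself, scoring Task Completion Rate is trivial. A minimal sketch follows; the outcomes are entirely made up (they are not the results of the study above), with one True/False per participant per site.

```python
def task_completion_rate(results):
    """Share of participants who completed the task.
    `results` is a list of booleans, one per participant."""
    return sum(results) / len(results)

# Hypothetical outcomes for 20 participants per site (made-up data).
site_a = [True] * 11 + [False] * 9
site_b = [True] * 16 + [False] * 4

rate_a = task_completion_rate(site_a)
rate_b = task_completion_rate(site_b)
print(f"Site A: {rate_a:.0%}  Site B: {rate_b:.0%}  gap: {rate_b - rate_a:+.0%}")
```

With only 20 participants, treat the gap as directional rather than precise, but it is often more than enough to start a very useful conversation.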

You could collect your own competitive intel using UserTesting.Com, User Zoom, Loop11, or one of many other tools.

Or for even "cheaper" (and a bit less impactful) insights you can use something delightful like www.fivesecondtest.com. Upload your pages, upload those of your competitors (or complete strangers not in your industry) and learn from real users which designs they prefer and what works best.

External Voice of Customer Data Bottom-line: I love customers, I love Task Completion Rate as a powerful metric, I love VOC. Above are three simple ways in which you can collect competitive intelligence using Voice of Customer data and drive action perhaps even faster than the first seven methods!

[PS: Nothing, absolutely nothing, works better to win against HiPPOs than using competitors and customers!]

The Optimal Competitive Analysis Process.

A lot of data is available about your industry or your competitors that you can use to your benefit.

Here is the process I recommend for CI data analysis:

1. Ensure that you understand exactly how the data is collected.

2. Understand both the sample size and sampling bias of the data reported to you. Really spend time on this.

3. If steps 1 and 2 pass the sniff test, use the data.

Don’t skip the steps, and glory will surely be yours.
